Why does Machine Learning need GPUs?

What is Machine Learning, and how do GPUs relate to it? This article answers these questions and explains why Machine Learning requires GPUs.

Machine Learning (ML) is an application of artificial intelligence (AI). There is no single exact definition of Machine Learning, but broadly, ML gives systems the ability to automatically learn and improve from experience, solving various problems without being explicitly programmed to do so. Machine learning algorithms focus on developing computer programs that can access data and use it to learn for themselves. (source: expertsystem.com)

So why are GPUs necessary for training Machine Learning models? Because Machine Learning comes down to solving advanced mathematical problems, largely statistical ones. Starting from thousands of initial data points, a Machine Learning system analyzes complex equations with many terms and optimizes them to give us accurate and reliable predictions.

The optimized equation is what's normally called a model. The model captures the relationship between the input data and the predictions. The hard math in Machine Learning lies in finding that model and in working out how important each observation is relative to the others.
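To make "optimizing an equation into a model" concrete, here is a minimal sketch that fits a one-variable linear model y ≈ w·x + b with plain gradient descent on mean squared error. The toy data, learning rate, and step count are assumptions chosen for illustration; this is a generic technique, not the method of any particular product or library. The learned weight `w` plays the role of the "importance" of the input.

```python
# Hypothetical toy data generated from y = 2x + 1 (assumed for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

def fit_linear(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w*x + b by minimizing mean squared error with gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Partial derivatives of MSE = (1/n) * sum((w*x + b - y)^2)
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

w, b = fit_linear(xs, ys)
print(f"w = {w:.3f}, b = {b:.3f}")
```

Real models repeat this same loop over millions of parameters and data points, which is where the heavy computation comes from.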

This is not a matter of handling a single matrix: Machine Learning involves crunching together many big matrices of numbers. That is exactly the kind of work GPUs (Graphics Processing Units) were built for, because computing graphics means doing computations across big matrices of pixel data. This is why Machine Learning needs GPUs: both workloads come down to computations across big matrices.
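The core operation behind this is matrix multiplication. The sketch below is a naive pure-Python version, assuming a small hypothetical batch of inputs and a weight matrix; real frameworks use optimized GPU libraries instead. The key point is in the comment: each output cell depends only on one row and one column, so all cells can be computed at the same time.

```python
def matmul(a, b):
    """Naive matrix multiply: C[i][j] = sum over k of A[i][k] * B[k][j]."""
    n, m, p = len(a), len(b), len(b[0])
    assert all(len(row) == m for row in a), "inner dimensions must match"
    # Every C[i][j] depends only on row i of A and column j of B,
    # so all n*p cells could be computed simultaneously (what a GPU exploits).
    return [[sum(a[i][k] * b[k][j] for k in range(m)) for j in range(p)]
            for i in range(n)]

# Hypothetical example: 2 inputs with 3 features each,
# multiplied by a 3x2 weight matrix, giving 2 outputs per input.
inputs = [[1.0, 2.0, 3.0],
          [4.0, 5.0, 6.0]]
weights = [[0.1, 0.2],
           [0.3, 0.4],
           [0.5, 0.6]]
print(matmul(inputs, weights))
```

An image works the same way: its pixels form a matrix, and graphics operations apply the same arithmetic to every cell.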

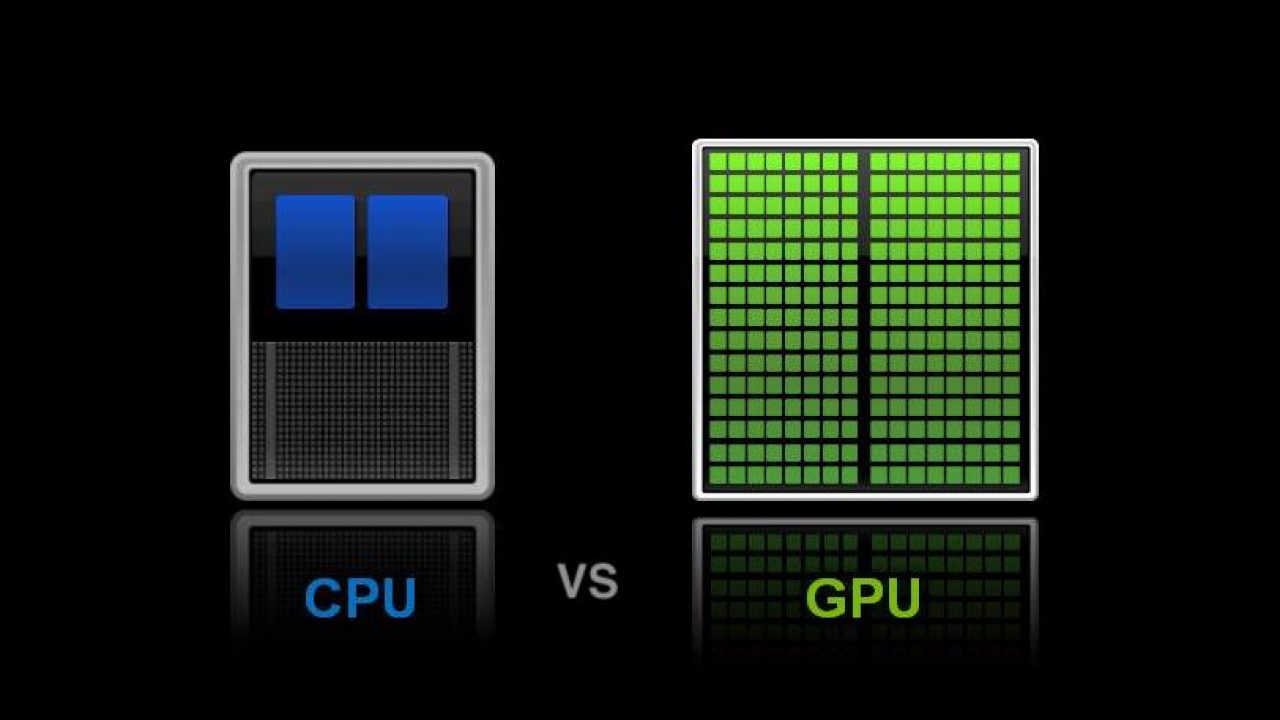

What is the difference between doing computations on a GPU and on a CPU? A CPU (Central Processing Unit) has a small number of powerful cores optimized for running instructions one after another very quickly. That suits work where one computation has to wait for another because it depends on the earlier result. A GPU takes the opposite approach: the key thing is parallel computation. For instance, to compute D = A + B + C where A, B, and C are long lists of numbers, a CPU core works through the elements largely in turn, while a GPU can hand each element D[i] = A[i] + B[i] + C[i] to its own thread, because no element depends on any other. Adding a few more cores to a CPU only helps so much, but a GPU with hundreds or thousands of simpler cores can run highly parallel workloads such as matrix math many times faster.
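The difference between dependent and independent work can be sketched in Python. A running total is inherently sequential, because each step needs the previous result. By contrast, computing D[i] = A[i] + B[i] + C[i] splits into independent pieces. Here `concurrent.futures.ThreadPoolExecutor` stands in for GPU threads as an assumption for illustration only: Python threads show the structure of the work, not GPU-level speedups, and the function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def dependent_chain(values):
    """Running total: step i needs the result of step i-1, so it is inherently sequential."""
    total, out = 0, []
    for v in values:
        total += v
        out.append(total)
    return out

def add_kernel(args):
    """One independent unit of work, like a single GPU thread handling index i."""
    a_i, b_i, c_i = args
    return a_i + b_i + c_i

def elementwise_sum(a, b, c, workers=4):
    """D[i] = A[i] + B[i] + C[i]; no element depends on another, so they can run concurrently."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order
        return list(pool.map(add_kernel, zip(a, b, c)))

A = [1, 2, 3, 4]
B = [10, 20, 30, 40]
C = [100, 200, 300, 400]
print(dependent_chain(A))        # [1, 3, 6, 10]
print(elementwise_sum(A, B, C))  # [111, 222, 333, 444]
```

On a real GPU, the `add_kernel` idea would be written as a kernel that runs across thousands of threads at once.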

Therefore, investing in GPUs is a sound strategy for the future, because many engineering problems can be solved faster on a GPU than on a CPU. GPUs are designed to run small, simple tasks very quickly on a large scale, and for such workloads this parallelism delivers performance that conventional CPUs cannot match. As machine learning technology grows and demands more computing power, the importance of GPUs will only become clearer.

To help users build more accurate Machine Learning models faster, iRender is developing and planning to release GPUHub, an AI service that rents out computers with powerful GPU and CPU configurations. We offer 1 to 6 GTX 1080 Ti cards and 1 to 6 RTX 2080 Ti cards, speeding up work on training, cross-validation, and test sets. If you need any help, contact us via this link; our 24/7 technical support is always just a click away. iRender makes every effort to support our customers, thanks to our experts, software development teams, and technical directors, who are available 24 hours a day, 7 days a week, even during major holidays.