Some effective tools Data Scientists need to know

Data science is a fast-moving field that develops at a different pace than most other areas. It keeps evolving, and you don’t want to be left behind.

Foundationally, the skills required to be a data scientist remain the same: statistics, Python/R programming, SQL or NoSQL knowledge, PyTorch/TensorFlow and data visualization. However, the tools data scientists use change constantly. In this article, we share the best basic tools for academic data scientists, as well as for early-career data scientists and even non-programmers looking to incorporate data science techniques into their workflow.

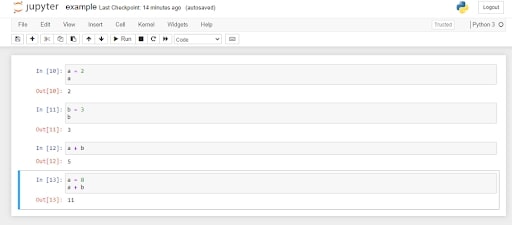

1. IDEs: Jupyter Notebooks / PyCharm / Visual Studio Code

It’s essential to use the right IDE (Integrated Development Environment) for your project. These tools are well known and bring benefits to programmers, data science hobbyists and non-expert programmers alike. Although academia has been slow to adopt Jupyter Notebooks, academic research projects offer some of the best scenarios for using notebooks to improve knowledge transfer.

Besides Jupyter Notebooks, tools like PyCharm and Visual Studio Code are standard for Python development. PyCharm is one of the most popular Python IDEs. It’s compatible with Linux, macOS and Windows and comes with many modules, packages and tools to enhance the Python development experience. PyCharm also has great intelligent code-assistance features. Finally, both PyCharm and Visual Studio Code offer great integration with Git tools for version control.

2. Anaconda

Anaconda is a great solution for implementing virtual environments, which is particularly useful if you need to replicate someone else’s code. This isn’t as good as using containers, but if you want to keep things simple then it is still a good step in the right direction.

For example, you create a requirements.txt file that lists all the packages used in your code. Likewise, when you are about to run someone else’s code, you start with a clean slate. It only takes two lines of code to start a virtual environment with Anaconda and install all the required packages from the requirements file. If the code still doesn’t run after that, the problem often lies in the original code rather than in your setup, so you don’t need to spend long hunting for what went wrong on your side.
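As a rough illustration of the requirements file described above, here is a minimal Python sketch that pins the installed versions of a list of packages. The function name `write_requirements`, the package list, the environment name `myproject` and the Python version in the comments are all assumptions made for this example, not part of any particular project.

```python
from importlib import metadata
from pathlib import Path


def write_requirements(packages, path="requirements.txt"):
    """Write one 'name==version' line per package that is installed locally."""
    lines = []
    for name in packages:
        try:
            lines.append(f"{name}=={metadata.version(name)}")
        except metadata.PackageNotFoundError:
            # Not installed here: record the bare name rather than guess a version.
            lines.append(name)
    Path(path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return lines


if __name__ == "__main__":
    # Hypothetical package list; replace it with what your code imports.
    print(write_requirements(["numpy", "pandas"]))

    # Rebuilding the environment elsewhere would then typically look like
    # this (environment name and Python version are assumptions):
    #   conda create --name myproject python=3.11 pip
    #   conda run --name myproject pip install -r requirements.txt
```

Pinning exact versions is one design choice among several; it makes the environment easier to reproduce at the cost of having to update the pins by hand later.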

Dr. Soumaya Mauthoor also compares Anaconda with pipenv for creating Python virtual environments. As her article shows, there are advantages to using Anaconda.

3. iRender - GPU Cloud for Training and Transferring Machine Learning Models

There are plenty of options for machine learning as a service (MLaaS) to train models on the cloud, such as Amazon SageMaker, Microsoft Azure ML Studio, IBM Watson ML Model Builder and Google Cloud AutoML. They are large companies that have provided MLaaS for a long time and have a lot of experience.

However, those services are often difficult for beginners to learn. The pricing is also complicated, with many hidden costs, so you may need to take courses to understand how it works and how to avoid spending thousands of dollars.

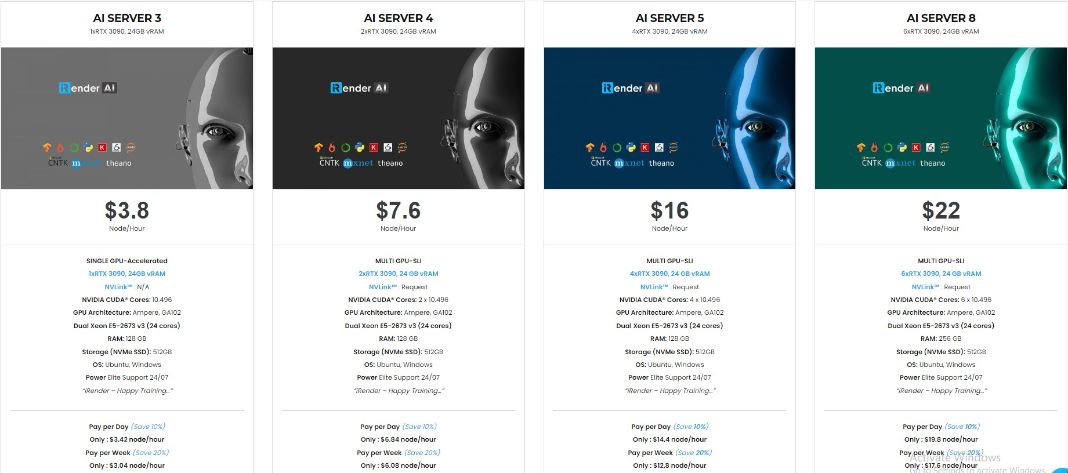

At iRender, we rent out multi-GPU machines with state-of-the-art RTX 3090 cards. Our remote machines are optimized for scientific computing, machine learning and deep learning.

We support not only Python, but also AI IDEs and libraries such as TensorFlow, Jupyter, Anaconda, MXNet, PyTorch, Keras, CNTK, Caffe and so on.

These are our packages tailored for AI/Deep Learning:

Plus, at iRender, we offer more than just these configurations.

NVLink available for better performance

If 24GB of VRAM is not enough for your project, we always have NVLink to help you access more. You can read this article to learn how to set up NVLink on our machines.
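To judge whether the memory on a machine is enough, it can help to check what your framework actually sees. The following is a minimal sketch assuming PyTorch is installed; the helper name `describe_gpus` is made up for this example. It reports each card separately and says nothing about whether the cards are linked via NVLink.

```python
def describe_gpus():
    """Return a (name, memory in GiB) tuple for each CUDA device PyTorch can see."""
    try:
        import torch
    except ImportError:
        return []  # PyTorch is not installed on this machine
    if not torch.cuda.is_available():
        return []  # no usable CUDA device
    gpus = []
    for index in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(index)
        gpus.append((props.name, round(props.total_memory / 1024**3, 1)))
    return gpus


if __name__ == "__main__":
    for name, gib in describe_gpus():
        print(f"{name}: {gib} GiB")
```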

Free and convenient transferring tool

iRender offers a powerful and free file transfer tool: Gpuhub Sync. It offers fast transfer speeds and large data capacity, and it is completely free. You can upload all the necessary data to Gpuhub Sync at any time without connecting to the server. The data will be automatically synchronized to the Z drive inside the server, ready for you to use.

Flexible price

Besides hourly rental, you can save from 10% to 20% with our Fixed Rental feature. For those who need a server for more than a day, or who have extremely large projects, we recommend choosing a daily, weekly or monthly rental package. The discount is attractive (up to 10% for daily packages, 20% for weekly and monthly packages), and you don’t have to worry about overcharging if you forget to shut down the server.

Real human 24/7 support service

Users can access our web-based online platform and use multiple nodes to render at the same time. With us, it does not matter where you are: as long as you are connected to the internet, you can access the 24/7 rendering services that we provide, and if you run into any issue, our real human 24/7 support team is always ready to help.

Conclusion

Although many industry data scientists already make use of the tools above, academic data scientists tend to lag behind. Anaconda, Jupyter Notebooks, PyCharm and Visual Studio Code are all free/open-source tools to consider if you work in data science.

Ultimately, these tools can help any academic or novice data scientist optimize their workflow and become aligned with industry best practices.

Register an account today to experience our service. Or contact us via WhatsApp: (+84) 916806116 for advice and support.

Thank you & Happy Training!

Source: builtin.com
