The risks of AI in 2022

AI (artificial intelligence) has become an increasingly large part of modern life, with many applications helping to change the world. However, some people, if not many, think it is very dangerous. The rapid growth of AI, if used in the wrong way, could have serious consequences.

There are many theories about destructive superintelligence: artificial general intelligence that is created by humans and escapes our control to wreak havoc. It may or may not happen. Right now we are still in the very early stages of artificial intelligence, so let’s talk about some of the current and near-future pitfalls: the risks of AI.

Job automation

Job automation may be the most immediate concern, because AI has already partially replaced humans in certain types of jobs. For example, if your job consists of predictable and repetitive tasks, there is a high risk that it will be done by AI. Reports from the Brookings Institution indicate that AI will soon replace 70% of tasks in areas like retail sales, market analysis, hospitality, warehouse labour, and even white-collar jobs.

It’s not only repetitive jobs that are slowly being replaced by AI; professions that require graduate degrees and additional post-college training aren’t immune to AI displacement either. John C. Havens, author of Heartificial Intelligence: Embracing Humanity and Maximizing Machines, interviewed the head of a law firm about machine learning. He described a case where the head of the firm could replace 10 people (each with a salary of $100,000) with software that costs only $200,000. The software would save him money, and it would also increase productivity by 70 percent and eradicate roughly 95 percent of errors. What would he choose? It’s easy to see.
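To make the arithmetic in the law firm example concrete, here is a minimal sketch in Python. The figures are the ones quoted above; the function name `compare_costs` is hypothetical, and the sketch assumes that the salaries and the software cost cover the same period, which the source does not state.

```python
# Rough cost comparison for the law firm example.
# Assumption: salaries and software cost cover the same period
# (the source does not say whether $200,000 is one-time or recurring).

STAFF_COUNT = 10             # people who could be replaced, as quoted
SALARY_PER_PERSON = 100_000  # USD per person, as quoted
SOFTWARE_COST = 200_000      # USD, as quoted


def compare_costs(staff_count, salary_per_person, software_cost):
    """Return total staff cost, absolute savings, and savings as a share of staff cost."""
    staff_cost = staff_count * salary_per_person
    savings = staff_cost - software_cost
    return {
        "staff_cost": staff_cost,
        "savings": savings,
        "savings_share": savings / staff_cost,
    }


result = compare_costs(STAFF_COUNT, SALARY_PER_PERSON, SOFTWARE_COST)
print(f"Staff cost:  ${result['staff_cost']:,}")
print(f"Savings:     ${result['savings']:,}")
print(f"Share saved: {result['savings_share']:.0%}")
```

Under that assumption, the software costs one fifth of the salaries it replaces, before counting the productivity and error-rate gains mentioned in the interview.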

Technology strategist Chris Messina said that accounting will be the next field at risk. A human auditor reads through a lot of information and then makes decisions based on it. Once AI is able to comb through large volumes of data and quickly deliver a decision, human auditors may no longer be needed.

In fields such as medical diagnostics, where good data is abundant, algorithms are becoming just as good as the experts. The difference is that an algorithm can draw conclusions in a fraction of a second, and it can be reproduced inexpensively all over the world. Soon everyone, everywhere could have access to the same quality of diagnosis as a top radiology expert, and for a low price.

Privacy, Security and the rise of “Deepfakes”

Job loss is only one of many potential risks of AI. Another issue that is slowly emerging right now is privacy and security.

In February 2018, 26 researchers from 14 institutions published a paper titled “The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation”. In it, they argued that if AI is not strictly and ethically controlled, it could threaten digital security, physical security and political security.

A prime example is China’s “Orwellian” use of facial recognition technology in offices, schools and other venues. What will happen if bad actors exploit this AI-analyzed monitoring?

The concern grows even greater with the rise of so-called audio and video deepfakes. These are created by manipulating voices and likenesses, and they are making waves across the world. For example, someone can make a video or audio clip of a politician spouting racist or sexist views that they never actually expressed. If the clip is good enough to fool the general public and avoid detection, it could be a huge issue. We could no longer tell what is real from what is fake, or rely on anything we see or hear. That would be a disaster.

AI bias and widening socioeconomic inequality

AI is developed by humans, and humans are inherently biased, so AI can be biased too. According to Princeton computer science professor Olga Russakovsky, AI researchers are primarily male, “who come from certain racial demographics, who grew up in high socioeconomic areas, primarily people without disabilities.” That makes it hard for them to think broadly about world issues. They can try to understand the social dynamics of the world, but they are human and cannot avoid having biases.

Autonomous weapons and a potential AI arms race

Elon Musk once said that AI is more dangerous than nukes. Not everyone agrees with him, but around 30,000 AI/robotics researchers and others certainly think so. Why? There are two reasons.

The first is that AI might decide on its own actions to achieve its goal. What if it decides to launch nukes or other weapons of mass destruction without human intervention? The result would be disastrous.

The second is that AI can be exploited by terrorists or dictators. The researchers wrote that “Autonomous weapons are ideal for tasks such as assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group.” This could trigger an unnecessary AI arms race.

Mitigating the risk of AI

It is really hard to mitigate the risks of AI: one party can develop it safely, but others can still use it in the wrong way. Different people will make different choices. At the very least, we need a public body that can regulate how AI is used without holding back the progress of the basic technology.

Final thought

Over time, we may come to see AI as revolutionary or world-changing, but it has drawbacks, including the risks of AI in 2022 discussed above. These risks could be very damaging and dangerous, and we will need to be really careful when developing and using AI.

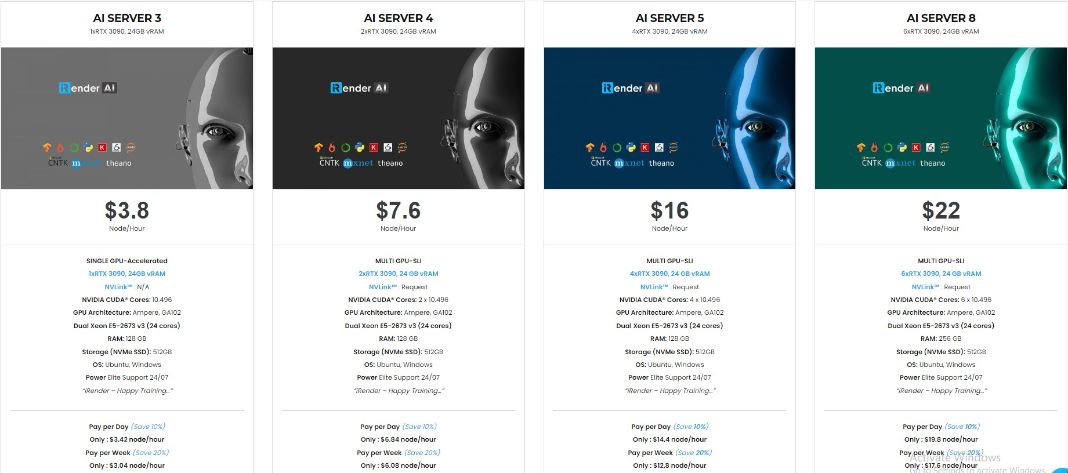

iRender currently provides a GPU cloud service for AI/DL so that users can train their models. With our high-configuration, high-performance machines (RTX3090), you can install any software you need. With just a few clicks, you can get access to a machine and take full control of it, and your model training will speed up considerably.

In addition, we provide other features such as NVLink if you need more VRAM, Gpuhub Sync to transfer and sync files faster, and Fixed Rental to save 10-20% of credits compared to hourly rental (10% for daily rental, 20% for weekly and monthly rental).

Register an account today to experience our service, or contact us via WhatsApp at (+84) 916806116 for advice and support.

Thank you & Happy Training!

Source: builtin.com
