Why does Machine Learning need the GPU?

Why do so many chip manufacturers, such as Nvidia and AMD, invest in GPUs rather than CPUs for Machine Learning? Read on to find out.

Nvidia once held a conference on games, yet the main topic everyone discussed there was GPU technology for Machine Learning. Nvidia was not alone: AMD also introduced a new line of GPUs targeting machine learning at CES 2018. Machine Learning is a field of artificial intelligence that researches and builds techniques allowing computers to “learn” automatically from available data in order to solve various problems. That may sound unrelated to graphics, so why are both Nvidia and AMD jumping into GPU research for machine learning? Why didn’t they choose the CPU? The answer lies in the statistical matrix.

Machine Learning is about solving problems with math and statistical matrices. Using complex equations with many formulas, it analyzes large amounts of initial data, then optimizes those equations to give us accurate and reliable predictions.

These optimized equations are often referred to as models. A model simulates the relationships between data and uses them to make predictions. The mathematical challenge in Machine Learning is working out a model, that is, understanding how much one piece of data matters relative to another.
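To make the idea of a model as an optimized equation concrete, here is a minimal sketch in plain Python. It assumes the simplest possible case, a straight line fitted by least squares; the function name `fit_line` and the sample data are made up for illustration and are not taken from any particular library.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how much x varies on its own.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data that follows y = 2x + 1 exactly.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)

# The fitted equation is the "model"; use it to predict an unseen input.
prediction = slope * 5.0 + intercept
print(slope, intercept, prediction)
```

The slope plays the role of the "importance" of the input: the larger it is, the more the prediction changes when the input changes. Real models have many such numbers, usually arranged in matrices.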

Machine learning does not use a single statistical matrix. For best performance, we must combine many matrices rather than work with just one. Computing and combining large numbers of models is extremely complex, so people came up with a way to run the work on GPUs (Graphics Processing Units). These are graphics processors built to compute huge numbers of pixels at once, and the values in a matrix can be processed in much the same way as pixels. Multi-matrix computation is the main reason Machine Learning needs a GPU.
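The sketch below illustrates why matrix computation suits that kind of hardware. It is plain Python running on the CPU, not GPU code, and the helper names `matmul_cell` and `matmul_any_order` are hypothetical. The point it tries to show is that every cell of a matrix product depends only on one row and one column of the inputs, so the cells can be computed in any order, and therefore, on suitable hardware, all at the same time.

```python
import random


def matmul_cell(a, b, i, j):
    """Compute one output cell: row i of a dotted with column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_any_order(a, b, seed=0):
    """Multiply a by b, filling in the output cells in a shuffled order."""
    rows, cols = len(a), len(b[0])
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    # Shuffle to stress that no cell needs the result of any other cell.
    random.Random(seed).shuffle(cells)
    out = [[0] * cols for _ in range(rows)]
    for i, j in cells:
        out[i][j] = matmul_cell(a, b, i, j)
    return out


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
result = matmul_any_order(a, b)
print(result)
```

A GPU takes this independence literally: instead of looping over the cells one by one, it assigns them to many small cores that run simultaneously, much as it does with the pixels of an image.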

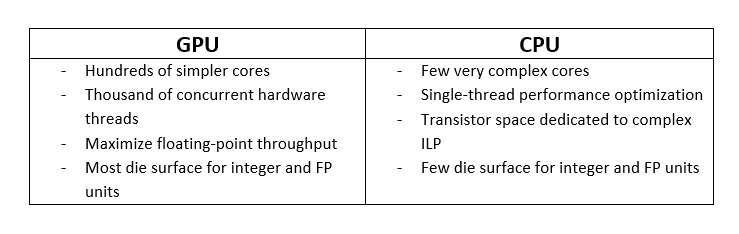

Check out the following comparison between GPU and CPU:

The comparison shows that investing in GPUs is a sound and effective step for the future, because problems limited by computing power are easier to solve on a GPU than on a CPU. The GPU is designed to run many small tasks very quickly and simultaneously, which makes it efficient at large scale. As machine learning evolves and demands more computing power, the importance of the GPU will become increasingly evident.

To help users solve machine learning problems more simply and smoothly, iRender offers a high-configuration GPU rental service. We offer packages of 1-6 RTX 2080Ti or RTX 3090 cards, and a package of 6 RTX 3080 cards, which make processing of the training set, cross-validation set, and test set faster and smoother. If you need help, our 24/7 live consulting and technical support team is on hand, just a click away. iRender keeps expert admins, a software development team, experienced supervisors, and technical directors available 24 hours a day, 7 days a week, even during major holidays.