Should you use NVLink or SLI or multiple GPUs for rendering?

Multi-GPU setups are more popular than ever because computational tasks keep growing in complexity, which has pushed GPU computing toward scalable designs. This is especially true in 3D rendering, where faster render times matter more than anything else. In response, technologies such as SLI and NVLink let you connect two or more GPUs and scale up performance. But do they deliver everything they promise, or do they come with downsides? Let’s explore those questions with iRender.

What is SLI?

SLI stands for Scalable Link Interface. NVIDIA released it in 2004 to improve performance when multiple GeForce GPUs work together. Before that, in 1998, the company 3dfx (later bought by NVIDIA) had introduced an earlier technology with the same acronym, Scan-Line Interleave.

This technology allows you to use up to four cards at once. It works hierarchically, using a parallel processing algorithm in which one GPU is the master and the others are slaves. The master card takes the information, divides it into smaller pieces, and directs the workload of the other cards, so the work is processed by multiple cards at once.

Because of this hierarchy, two 11 GB cards do not give you a total of 22 GB of VRAM. You only get the 11 GB of the master card.

What is NVLink?

NVLink is often described as a more advanced form of SLI. It also connects multiple GPUs so that they can work together, but it is a newer technology and differs from SLI in several ways.

First, NVLink connects only two cards in the consumer space, but up to 16 cards in the enterprise space, which is more than SLI supports.

Second, you can expect more bandwidth with NVLink. While SLI bridges reach only about 2 GB/s, an NVLink bridge can offer up to 200 GB/s, depending on the GPU model. NVLink therefore removes the data transfer bottleneck of SLI.
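To get a feel for what that bandwidth gap means, here is a rough back-of-the-envelope sketch. It uses the two bandwidth figures quoted above and an 8 GB payload, which is a hypothetical number chosen only for illustration. It assumes an idealized link with no latency or protocol overhead, so real transfers will be slower.

```python
def transfer_seconds(data_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move data_gb gigabytes over a link (no overhead)."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return data_gb / bandwidth_gb_per_s


# Bandwidth figures as quoted in this article.
SLI_GB_PER_S = 2.0
NVLINK_GB_PER_S = 200.0

# Hypothetical amount of scene data to move between two cards.
scene_gb = 8.0

sli_time = transfer_seconds(scene_gb, SLI_GB_PER_S)
nvlink_time = transfer_seconds(scene_gb, NVLINK_GB_PER_S)

print(f"SLI:    {sli_time:.2f} s")
print(f"NVLink: {nvlink_time:.2f} s")
print(f"NVLink is {sli_time / nvlink_time:.0f}x faster for this transfer")
```

The ratio between the two times is simply the ratio between the two bandwidths, whatever payload size you plug in.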

An important advance of NVLink over SLI is that it does not work in a master-slave relationship. It is based on mesh networking, where communication is bi-directional and each card can access the memory of the others.

How is this better than SLI’s master-slave relationship? With SLI, the master card spends much of its computational power collecting and directing data from the other cards, so you cannot get its full power for rendering. With NVLink, each GPU works independently and can communicate with the other GPUs and with the CPU directly, without sending information through a master.

While two cards connected with SLI cannot give you double the VRAM, two cards connected with NVLink can, provided your rendering software supports memory pooling. NVLink lets you pool your VRAM, making it a good choice for complex calculations.
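The VRAM difference can be written down as a small calculation. The sketch below is only an illustration: the function names and the 16 GB scene size are hypothetical, and it assumes that without pooling the smallest card sets the limit (for identical cards that is the same as the master card's memory). Real pooled setups also carry some overhead, so the pooled figure is an upper bound.

```python
from typing import Sequence


def usable_vram_gb(cards_gb: Sequence[float], pooled: bool) -> float:
    """Approximate VRAM available to one scene.

    pooled=False: memory is not shared, so the smallest card is the limit.
    pooled=True:  memory is shared (NVLink-style), so capacities add up.
    """
    if not cards_gb:
        raise ValueError("at least one card is required")
    return float(sum(cards_gb)) if pooled else float(min(cards_gb))


def scene_fits(scene_gb: float, cards_gb: Sequence[float], pooled: bool) -> bool:
    """True if the scene fits in the usable VRAM under this model."""
    return scene_gb <= usable_vram_gb(cards_gb, pooled)


cards = [11.0, 11.0]  # two 11 GB cards, as in the example above
scene = 16.0          # hypothetical scene size in GB

print("Without pooling:", usable_vram_gb(cards, pooled=False), "GB,",
      "fits" if scene_fits(scene, cards, pooled=False) else "does not fit")
print("With pooling:   ", usable_vram_gb(cards, pooled=True), "GB,",
      "fits" if scene_fits(scene, cards, pooled=True) else "does not fit")
```

In this model, pooling only changes the outcome when the scene is larger than a single card, which matches the point that NVLink mainly pays off for very complex scenes.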

Pros and Cons of SLI and NVLink

The basic idea behind SLI and NVLink is to make two or more GPUs work together and boost their combined performance. In practice, both technologies do let multiple GPUs work and communicate with each other. Working with more GPUs is better than working with one, and having multiple GPUs that can talk to each other and complete one job together is better than having them work separately.

However, is it really worth it in most cases? A common misconception about SLI and NVLink is that more graphics cards give you double, triple, or even quadruple the speed or video RAM. Unfortunately, reality is not that ideal. Connecting your GPUs with SLI or NVLink bridges also means more potential complications with heat and bugs.

With SLI, you cannot access more VRAM than the master GPU has, which makes large and complicated tasks impossible. NVLink gives you access to more VRAM and bandwidth, and that’s it. It is a lifesaver if your scene is too complex for the VRAM of one card. But for a simple scene that fits on one card, why add more hardware and force your PC to spend resources on it?

Should you use NVLink or SLI or multiple GPUs for rendering?

It depends on your needs and on your software. Let’s talk about some rendering engines that can use multiple GPUs, such as Octane, Redshift, Arnold GPU, V-Ray, and Cycles.

Some software supports SLI or NVLink, and some does not, in which case you cannot use them. Where SLI is supported, the recommendations differ: for example, the developers of Arnold and V-Ray suggest enabling SLI, while the developers of Octane, Redshift, and Cycles do not. With NVLink, the decision comes down to the complexity of your scene.

First of all, do you really need SLI or NVLink to render on multiple GPUs? The answer is no. Every multi-GPU rendering engine can drive multiple GPUs separately, without any technology connecting them. When producing final renders, the engine often uses bucket rendering, which means the image is rendered in smaller areas called buckets. The number of buckets rendered at the same time is determined by the number of GPUs in your system.

The picture below shows a final render in progress: one large image made up of many buckets, each a small square.

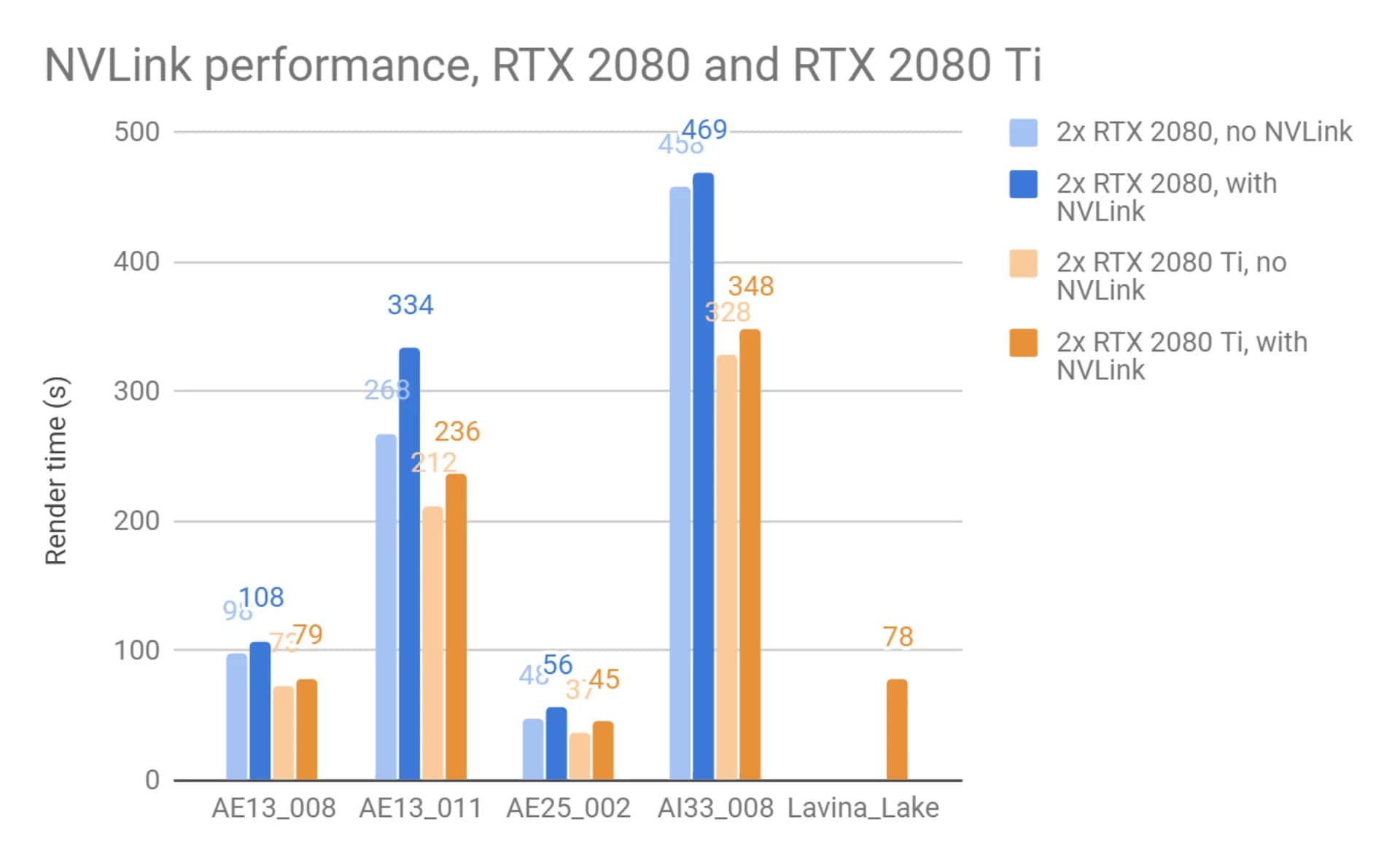
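As a rough illustration of bucket rendering, the sketch below splits an image into square buckets and hands them out to GPUs in turn. It is a simplified model, not how any particular engine is implemented: the function names, the 128-pixel bucket size, and the round-robin assignment are all assumptions made for the example. Real engines typically hand out buckets dynamically as each GPU finishes its current one.

```python
from typing import List, Tuple

# A bucket is described as (x, y, width, height) in pixels.
Bucket = Tuple[int, int, int, int]


def split_into_buckets(width: int, height: int, bucket_size: int) -> List[Bucket]:
    """Tile the image with square buckets, clipping those on the right and bottom edges."""
    if width <= 0 or height <= 0 or bucket_size <= 0:
        raise ValueError("width, height and bucket_size must be positive")
    buckets: List[Bucket] = []
    for y in range(0, height, bucket_size):
        for x in range(0, width, bucket_size):
            buckets.append((x, y,
                            min(bucket_size, width - x),
                            min(bucket_size, height - y)))
    return buckets


def assign_round_robin(buckets: List[Bucket], gpu_count: int) -> List[List[Bucket]]:
    """Give bucket i to GPU (i mod gpu_count). No link between the GPUs is needed."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    queues: List[List[Bucket]] = [[] for _ in range(gpu_count)]
    for i, bucket in enumerate(buckets):
        queues[i % gpu_count].append(bucket)
    return queues


buckets = split_into_buckets(1920, 1080, 128)
queues = assign_round_robin(buckets, gpu_count=2)

print("Total buckets:", len(buckets))
for gpu_index, queue in enumerate(queues):
    print(f"GPU {gpu_index}: {len(queue)} buckets")
```

Each GPU only needs its own copy of the scene and its own list of buckets, which is why this kind of rendering works without SLI or NVLink.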

The second question is: do SLI and NVLink speed up render times in proportion to the number of GPUs you have? Unfortunately, the answer is no. A bridge forces your computer to spend resources on managing the connection, so the GPUs are never perfectly utilized. Let’s look at the Chaos test of V-Ray GPU rendering with and without NVLink.

The first four scenes show the same result: enabling NVLink only increases the render time compared to leaving it disabled. Only the last scene needs NVLink to render at all, because it is too complex for the 11 GB of VRAM on a single RTX 2080 Ti.

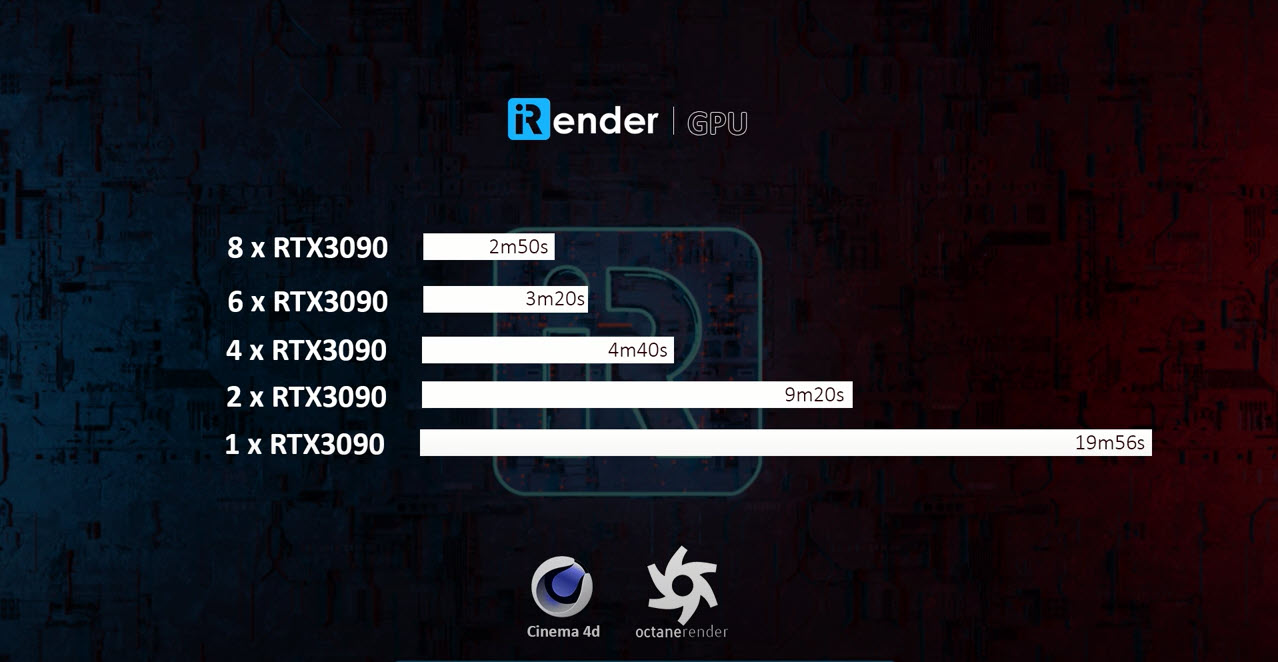

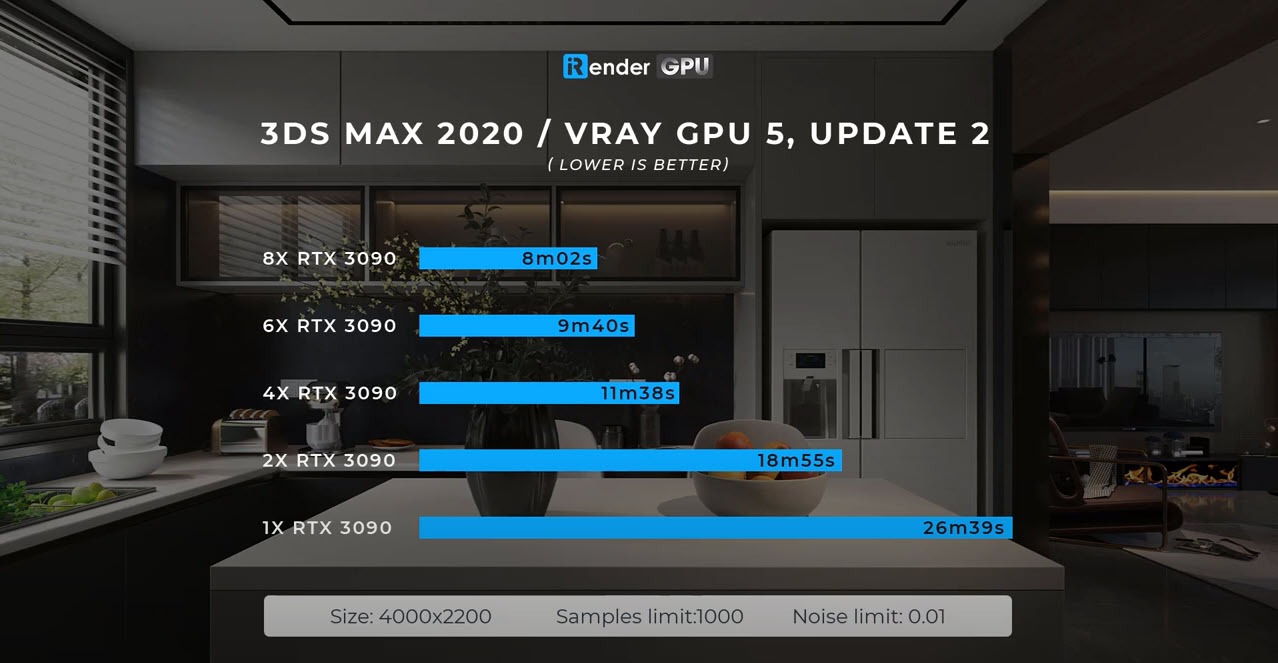

The third question is: without SLI or NVLink, will 2 GPUs render 2x faster than 1 GPU? Again, the answer is no. Doubling is the best-case scenario, and it is not easy to achieve in reality. Even a rendering engine like Octane, which claims perfectly linear scaling, cannot achieve it in every case. Let’s take a look at our tests of Redshift, Octane, and V-Ray on our servers with 1/2/4/6/8 x RTX 3090:

Redshift Render Performance on Multiple RTX 3090s by iRender

Octane Render Performance on Multiple RTX 3090s by iRender

V-Ray GPU Render Performance on Multiple RTX 3090s by iRender

Render times drop significantly with more GPUs, but the scaling is not linear in every case. With Redshift and Octane, 2 GPUs render about 2x faster than 1 GPU, but that is not the case for V-Ray. With Redshift, a 4-GPU rig is approximately 1.8 times faster than a 2-GPU rig. With Octane, an 8-GPU rig is about 1.6 times faster than a 4-GPU rig.

So keep in mind that more GPUs, whether connected or used separately, help you render faster, but not perfectly linearly faster.
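One way to read these numbers is as scaling efficiency: the measured speedup divided by the factor by which the GPU count grew. The short sketch below applies that to the two approximate figures from the paragraph above. The function name is made up for this example, and an efficiency of 100% would mean perfectly linear scaling.

```python
def scaling_efficiency(speedup: float, gpu_ratio: float) -> float:
    """Measured speedup divided by the ideal (linear) speedup."""
    if gpu_ratio <= 0:
        raise ValueError("gpu_ratio must be positive")
    return speedup / gpu_ratio


# Approximate figures quoted in this article.
redshift_2_to_4 = scaling_efficiency(speedup=1.8, gpu_ratio=4 / 2)
octane_4_to_8 = scaling_efficiency(speedup=1.6, gpu_ratio=8 / 4)

print(f"Redshift, 2 -> 4 GPUs: {redshift_2_to_4:.0%} of linear scaling")
print(f"Octane,   4 -> 8 GPUs: {octane_4_to_8:.0%} of linear scaling")
```

Under this measure, each doubling of the GPU count still helps a lot, but it delivers less than double the speed.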

Final words

The idea of connecting two GPUs so that they work as one is not new, and continued effort has produced many technologies over the years. So far, however, no connector lets two or more GPUs operate perfectly as one. NVLink comes closest: the GPUs can communicate with each other faster and share VRAM. Even so, that is far from turning them into a single GPU or giving you linear rendering speed.

Achieving better performance is sometimes easier than you may think. Optimizing your scene and removing unnecessary elements might help, or you may just need to render on your GPUs without any connector like SLI or NVLink. You will need those connectors in special cases, but in most cases they are hurdles, and it pays to keep things simple.

Register an account today for 20% bonus for new users to experience our service. Or contact us via WhatsApp: (+84) 916 806 116 for advice and support.

Happy rendering!
